Edited screengrab from Collabspace YouTube video.

Over the past few years, I have worked on several projects to migrate physical records data from one system to another, in this case, using Collabware's Collabspace SaaS solution for physical records. This migrated data includes information about the boxes or folders, box numbers and bar code values, titles, descriptions, storage locations, open/close dates etc. Microsoft Excel is a great tool that can assist in this process, but using it for this purpose has some ‘gotchas’ that I want to share with you, along with a checklist to consider when migrating your physical records.

In this article, I will cover...

- a common approach for migrating physical records,

- how to transform data and mitigate risk of error and

- 6 factors to consider (gotchas) if using Excel for your migration.

Common Approach for Physical Records Migration: Export, Transform, Load

A common approach to migrating physical records data is to use an Export, Transform, Load approach similar to what is used in data warehouse solutions. Data is exported from the original system into text, CSV, or Excel files. This is done by the people who know that system best or the IT area that supported it. These exported physical records data can then be reviewed, adjusted and transformed into data files suitable for import into the target system.

There are often situations where data in the source system was 'not quite right' in a few situations, or the customer wanted to change some things. For example, the original system may have had incorrect data for certain sets of records. This transformation process during the migration is a perfect opportunity to then make those corrections and get the data in the new system cleaned up, improved and more usable and searchable. Once transformed, the data can then be imported into the target system.

Transforming Data and Mitigating Risk of Error

How you view and transform this data is going to depend on the volume of data. In my experience, if you have fewer than 500,000 or so records, Microsoft Excel will likely be the tool to work with. Most people on the team will already have it, and its features for manipulating data and saving into different formats are extensive and flexible.

Using this manual method of transformation does introduce the potential for human error, however, many migration budgets cannot afford the development of a fully automated process so they can instead mitigate risk of manual updates with before and after audit counts and by using tools like 'Beyond Compare' to compare before and after files to ensure only the desired changes have taken place.

If you choose to use Excel, here are a few things you need to watch out for...

6 Factors to Consider if Using Excel:

1. Carriage Return (CR) and Line Feed (LF) Characters

Certain text fields in the original source system may have values that include carriage returns (CR) or line feeds (LF). These will wreak havoc on the CSV file data because instead of getting a nice row of data for each record, rows will be split at the CR/LF and the import process will not be able to properly interpret the data. These are hard to catch because they are non-visible characters and may only show up in a few situations.

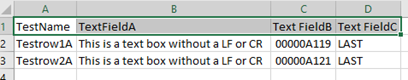

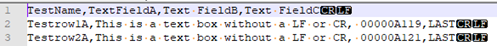

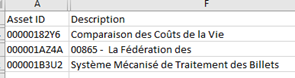

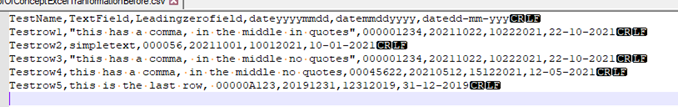

Here is an example, below, to demonstrate the issue. The first scenario is an Excel file with no line feeds or carriage returns imbedded in the data.

The Excel file looks fine. When saved a CSV and we look at the file with a text editor like Notepad or Notepad++, we see a single row for each record with the line feed (LF) at the end as it should be.

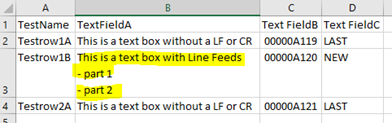

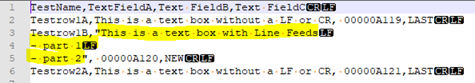

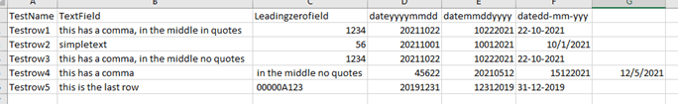

However, if we now insert a new row into the Excel file that includes imbedded line feeds in one of the cells (you can do this by editing the cell and pressing Alt+Enter), the cell value will be nicely formatted.

However, when we look at the saved CSV file, we now have 6 rows instead of the 4 we were expecting and using this file to import records to the target system will fail.

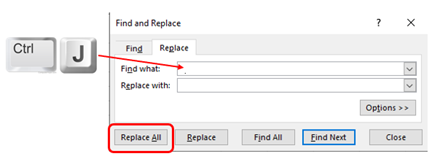

The quickest way to deal with this situation is to include a step in your transformation process to search all export files for carriage returns and line feeds characters and remove or replace them. To do this in Excel, use the Replace All feature. Type CTRL+J into the Find box and leave the Replace With field empty. Replace all will then remove all these characters.

If the carriage returns or linefeeds within a multi-line field need to be maintained in the target system, then a more sophisticated transformation technique will need to be considered.

2. Commas in text or description fields

Of course, if the source data contains commas in some of its text or description fields, you need to account for this. Most 'save as csv' functions will automatically put double quotes around values containing columns and this is correctly interpreted by Excel and other tools that support CSV files. However, some exports may be database-level query results exported as txt files, and perhaps only saved with a CSV file type. In these situations, you could have 'unquoted' comma text in your extracted data.

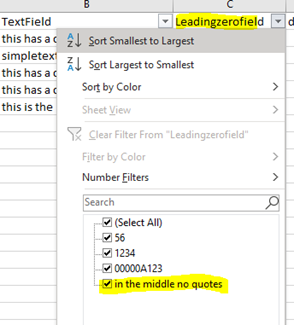

One way to easily tell if there are commas throwing things off is to turn on the Excel column filters on all the columns and see if there are any values in columns beyond what you expect to be the last column containing data. Displaying the filter drop down values will also sometimes highlight values that don't seem to belong. In the example here, you can see that text values are showing up in a column where the do not belong.

One way to easily tell if there are commas throwing things off is to turn on the Excel column filters on all the columns and see if there are any values in columns beyond what you expect to be the last column containing data. Displaying the filter drop down values will also sometimes highlight values that don't seem to belong. In the example here, you can see that text values are showing up in a column where the do not belong.

Search for commas in the exported data, ensure they have double quotes around the entire text of that field. If not, see if the export process can support the quoted values. If you are stuck with it, you can either replace them all with another character (space, period or bar). Alternatively, replace them temporality, then use Excel skills to append the values in that column with quotes, then revert that character back to a comma.

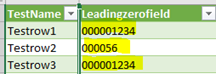

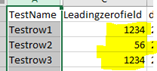

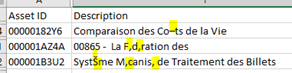

3. Numeric values with leading zeros

Excel does not seem to like zeros, especially leading zeros for numeric data. If you use Excel to open a CSV file containing a numeric data with leading zeros, Excel will automatically interpret it as a number field and suppress the leading zeros. And if you have autosave on, your original CSV File will then lose all those leading zeros automatically, without you intending to make any changes.

Correct Values: Incorrect Values:

One way to deal with this is to use the Excel>Data>Import CSV/Text function to import data from the source CSV instead of opening the CSV file directly.

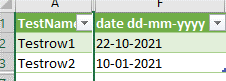

4. Special Characters and Language

When source data contains non-English text or special characters, there may be characters that are not properly represented in the CSV file if the CSV file encoding is not correct, or if the CSV file is created with just the default encoding at the time.

Correct Values: Incorrect Values:

Check the data in the source, and if there are characters from other languages present, find the appropriate UTF encoding that will support that character. We have found that saving as UTF-8 encoded CSV files will do a decent job, so when exporting data from the source system, make sure they provide the data using CSV encoding that supports the full character set you are working with.

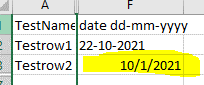

5. Date Fields

Excel likes to present date columns in certain formats. It tries to be smart and if it thinks it sees something that looks like a date, it will format it to match your regional settings. The issue is that this may have the effect of introducing date formatting characters that are unwanted, or swapping the meaning of what is a month or day value around. If excel then reformats the entire column in its 'best guess' date format, then you could have a mess on your hands.

Correct Values: Incorrect Values:

One way to address this is to use date values without formatting characters. However, if the target imports require formatting characters, then avoid opening the CSV file directly with Excel. Instead, use the Data>Import from Text/CSV option but choose "Data Type Detection" = "Do not detect data types" when you import the data. In this way, you can then force Excel to treat all the columns as text and you can keep control over the formatting of your date values.

6. Turn off Excel Autosave

With Autosave enabled, and Excel’s autoformatting tendencies, you can get into trouble and end up with corrupted data. Disable Autosave if you want to maintain control over your CSV file data formats.

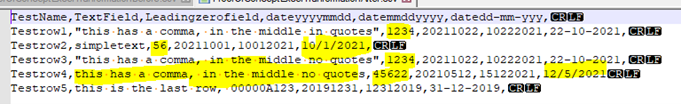

Here is an example of what could happen to you with Excel Autosave enabled:

Original File

In Excel with a simple double click to open the CSV file

After Excel Autosave

After the Autosave, leading zeros have been lost on certain fields and some date fields now have different characters resulting in inconsistent formats of dates in the same column.

Final Thoughts:

- Make sure you have a good txt file viewing tool like Notepad++. There may even be an add-in to allow viewing CSV files in a grid view for some of these tools.

- Use the Import CSV/TXT file feature of Excel

- Open Excel first and then choose the Data>Import TXT/CVS file in order to bring the data into Excel.

- Excel will create a new workbook and leave the original untouched. During the import you can then set the specific formats for each column. I found that setting all columns to text might be the safest bet.

- Once the CSV data is in Excel with all the columns set to txt, you can then save a working file in Excel, complete your data reviews, data adjustments and once the transformation process is complete, save back to a CSV file.

- Use tab delimited txt files instead of CSVs

- When Excel opens a txt file, it will automatically go into Import mode where you can avoid the data type detection issues with few clicks.

- The use of commas in multiline text is common. By using tab delimited formatted txt files, you avoid the imbedded comma's issue completely.

Microsoft Excel has been a great tool for use in the transformation steps in my physical records data migration projects. Be aware of the 'Gotcha's!' above and use the power of Excel formulas and lookups to get the data the way you want before importing into Collabspace or sending it onto its destination.

We hope this article was helpful as you migrate your physical records! We've also got articles on how to modernize your physical records management, and tips for migrating to the Cloud. Please contact us if you have any questions, or listen to our webinar recording on managing physical records in M365(?), free to access: